PGO: The Postgres Operator from Crunchy Data

Run Cloud Native PostgreSQL on Kubernetes with PGO: The Postgres Operator from Crunchy Data!

Latest Release: 4.7.9

PGO, the Postgres Operator developed by Crunchy Data and included in Crunchy PostgreSQL for Kubernetes, automates and simplifies deploying and managing open source PostgreSQL clusters on Kubernetes.

Whether you need to get a simple Postgres cluster up and running, need to deploy a high availability, fault tolerant cluster in production, or are running your own database-as-a-service, the PostgreSQL Operator provides the essential features you need to keep your cloud native Postgres clusters healthy, including:

Postgres Cluster Provisioning

Create, Scale, & Delete PostgreSQL clusters with ease, while fully customizing your Pods and PostgreSQL configuration!

High Availability

Safe, automated failover backed by a distributed-consensus-based high-availability solution. Uses Pod Anti-Affinity to improve resiliency; you can configure how strictly it is enforced! Failed primaries automatically heal, allowing for faster recovery time.

Support for standby PostgreSQL clusters that work both within and across multiple Kubernetes clusters.

Disaster Recovery

Backups and restores leverage the open source pgBackRest utility and include support for full, incremental, and differential backups as well as efficient delta restores. Set how long you want your backups retained. Works great with very large databases!

TLS

Secure communication between your applications and data servers by enabling TLS for your PostgreSQL servers, including the ability to enforce all of your connections to use TLS.

Monitoring

Track the health of your PostgreSQL clusters using the open source pgMonitor library.

PostgreSQL User Management

Quickly add and remove users from your PostgreSQL clusters with powerful commands. Manage password expiration policies or use your preferred PostgreSQL authentication scheme.

Upgrade Management

Safely apply PostgreSQL updates with minimal availability impact to your PostgreSQL clusters.

Advanced Replication Support

Choose between asynchronous replication and synchronous replication for workloads that are sensitive to losing transactions.

Clone

Create new clusters from your existing clusters or backups with pgo create cluster --restore-from.
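If you script clones rather than typing them by hand, the command above can be assembled programmatically. The following is a minimal sketch, not part of PGO itself: the helper names `clone_command` and `run_or_print` and the cluster names `hippo` and `newhippo` are hypothetical, and it assumes the new cluster name is passed as a positional argument to pgo create cluster.

```python
import shlex
import shutil
import subprocess


def clone_command(new_cluster, source_cluster):
    """Build the argument list for cloning source_cluster into new_cluster.

    Assumption: the new cluster name is a positional argument and the
    source cluster is passed through --restore-from.
    """
    return [
        "pgo", "create", "cluster", new_cluster,
        f"--restore-from={source_cluster}",
    ]


def run_or_print(argv):
    """Run the command if the pgo client is on PATH; otherwise print it."""
    if shutil.which(argv[0]) is None:
        # No pgo client available: show what would have been executed.
        print("dry run:", shlex.join(argv))
        return None
    return subprocess.run(argv, check=True)


if __name__ == "__main__":
    # "hippo" and "newhippo" are placeholder cluster names.
    run_or_print(clone_command("newhippo", "hippo"))
```

Passing the arguments as a list avoids shell quoting problems when cluster names come from user input.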

Connection Pooling

Use pgBouncer for connection pooling.

Affinity and Tolerations

Have your PostgreSQL clusters deployed to Kubernetes Nodes of your preference with node affinity, or designate which nodes Kubernetes can schedule PostgreSQL instances to with tolerations.

Scheduled Backups

Choose the type of backup (full, incremental, differential) and how frequently you want it to occur on each PostgreSQL cluster.

Backup to S3 or GCS

Store your backups in Amazon S3, any object storage system that supports the S3 protocol, or GCS. The PostgreSQL Operator can back up, restore, and create new clusters from these backups.

Multi-Namespace Support

You can control how PGO, the Postgres Operator, leverages Kubernetes Namespaces with several different deployment models:

- Deploy PGO and all PostgreSQL clusters to the same namespace

- Deploy PGO to one namespace, and all PostgreSQL clusters to a different namespace

- Deploy PGO to one namespace, and have your PostgreSQL clusters managed across multiple namespaces

- Dynamically add and remove namespaces managed by the PostgreSQL Operator using the pgo client to run pgo create namespace and pgo delete namespace
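The dynamic namespace commands in the last item can be generated from a script when namespaces are added and removed often. This is a small sketch under stated assumptions: `namespace_command` and the namespace name `demo-namespace` are hypothetical, and the namespace is assumed to be passed as a positional argument. It only prints the commands; it does not run them.

```python
import shlex


def namespace_command(action, namespace):
    """Build the pgo argument list to add or remove a managed namespace.

    Assumption: the namespace name follows the subcommand as a
    positional argument.
    """
    if action not in ("create", "delete"):
        raise ValueError(f"unsupported action: {action!r}")
    return ["pgo", action, "namespace", namespace]


# Print the two commands for a placeholder namespace name.
for action in ("create", "delete"):
    print(shlex.join(namespace_command(action, "demo-namespace")))
```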

Full Customizability

The Postgres Operator (PGO) makes it easy to get Postgres up and running on Kubernetes-enabled platforms, but we know that there are further customizations that you can make. As such, PGO allows you to further customize your deployments, including:

- Select different storage classes for your primary, replica, and backup storage

- Select your own container resources class for each PostgreSQL cluster deployment; differentiate between resources applied for primary and replica clusters!

- Use your own container image repository, including support for imagePullSecrets and private repositories

- Customize your PostgreSQL configuration

- Bring your own trusted certificate authority (CA) for use with the Operator API server

- Override your PostgreSQL configuration for each cluster

How it Works

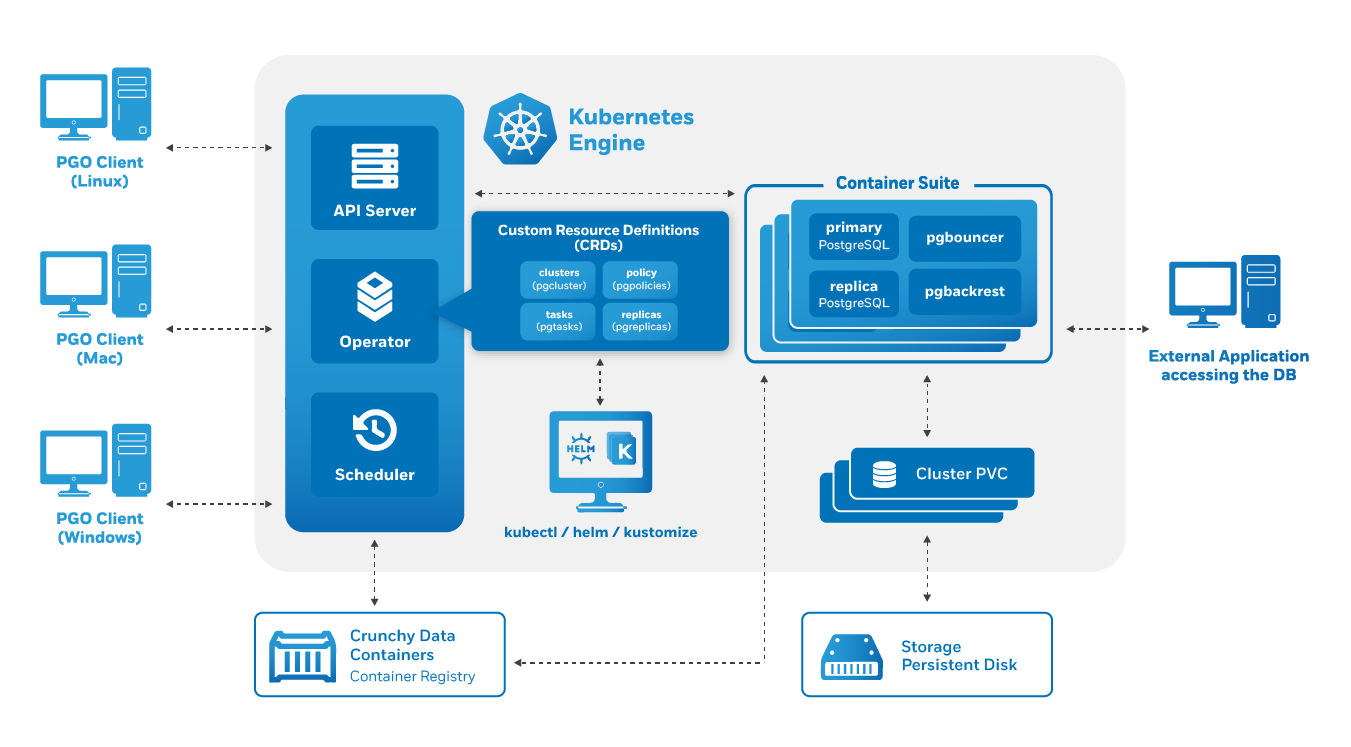

The Crunchy PostgreSQL Operator extends Kubernetes to provide a higher-level abstraction for rapid creation and management of PostgreSQL clusters. The Crunchy PostgreSQL Operator leverages a Kubernetes concept referred to as “Custom Resources” to create several custom resource definitions (CRDs) that allow for the management of PostgreSQL clusters.

Included Components

PostgreSQL containers deployed with the PostgreSQL Operator include the following components:

In addition to the above, the geospatially enhanced PostgreSQL + PostGIS container adds the following components:

PostgreSQL Operator Monitoring uses the following components:

Additional containers that are not directly integrated with the PostgreSQL Operator but can work alongside it include:

For more information about which versions of the PostgreSQL Operator include which components, please visit the compatibility section of the documentation.

Supported Platforms

PGO, the Postgres Operator, is Kubernetes-native, maintains backwards compatibility to Kubernetes 1.11, and is tested against the following platforms:

- Kubernetes 1.17+

- OpenShift 4.4+

- OpenShift 3.11

- Google Kubernetes Engine (GKE), including Anthos

- Amazon EKS

- Microsoft AKS

- VMware Tanzu

This list only includes the platforms that the Postgres Operator is specifically tested on as part of the release process; PGO works on other Kubernetes distributions as well.

Storage

PGO, the Postgres Operator, is tested with a variety of different types of Kubernetes storage and Storage Classes, as well as hostPath and NFS.

We know there are a variety of different types of Storage Classes available for Kubernetes and we do our best to test each one, but due to the breadth of this area we are unable to verify Postgres Operator functionality in each one. With that said, the PostgreSQL Operator is designed to be storage class agnostic and has been demonstrated to work with additional Storage Classes.

The PGO Postgres Operator project source code is available subject to the Apache 2.0 license, with the PGO logo and branding assets covered by our trademark guidelines.